Could the Hunt for Hubble's Constant Overturn the Standard Model of Cosmology?

Cosmology, we have a problem: Two methods that scientists use to measure the expansion of the universe produce different answers, and astronomers aren't quite sure what's going on. A recent episode of NASA's ScienceCasts video series explored this conundrum.

Since 1929, scientists have known that the universe is expanding at a rate dictated by the so-called Hubble constant, or H0, named after U.S. astronomer Edwin Hubble. He and his colleagues realized that, when observing distant galaxies, the farther away in the cosmos they looked, the faster the galaxies were moving away from Earth. They did this by measuring the "redshift" of light coming from those galaxies, meaning the degree to which the light is stretched by the object's motion. The greater the redshift, the faster a galaxy is moving away, and, by Hubble's relation, the farther it is from Earth.
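
The relation described above can be sketched in a few lines of Python. This is a minimal illustration, not the method used by any particular study: it assumes the low-redshift approximation (velocity equals the speed of light times redshift), and the function names, the example wavelengths and the default H0 of 70 kilometers per second per megaparsec are placeholders chosen for the example.

```python
C_KM_S = 299_792.458  # speed of light in km/s


def redshift(observed_nm: float, emitted_nm: float) -> float:
    """Fractional stretch of a spectral line: z = (observed - emitted) / emitted."""
    return (observed_nm - emitted_nm) / emitted_nm


def recession_velocity_km_s(z: float) -> float:
    """Low-redshift approximation v = c * z; only reasonable for z much less than 1."""
    return C_KM_S * z


def hubble_distance_mpc(velocity_km_s: float, h0: float = 70.0) -> float:
    """Hubble's relation v = H0 * d, solved for d (megaparsecs).

    h0 is in km/s/Mpc; the default of 70 is an assumed round number.
    """
    return velocity_km_s / h0


if __name__ == "__main__":
    # Hypothetical galaxy: a hydrogen line emitted at 656.3 nm is observed at 662.8 nm.
    z = redshift(observed_nm=662.8, emitted_nm=656.3)
    v = recession_velocity_km_s(z)
    d = hubble_distance_mpc(v)
    print(f"z = {z:.4f}, v = {v:.0f} km/s, d = {d:.1f} Mpc")
```

Turning the last function around (dividing a measured velocity by an independently measured distance) is, in essence, how a value of H0 is estimated from nearby galaxies.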

The Hubble value is considered a fundamental constant, just like the speed of light, but scientists can calculate its value only by measuring other phenomena in the universe, according to the video.

Measuring supernovas

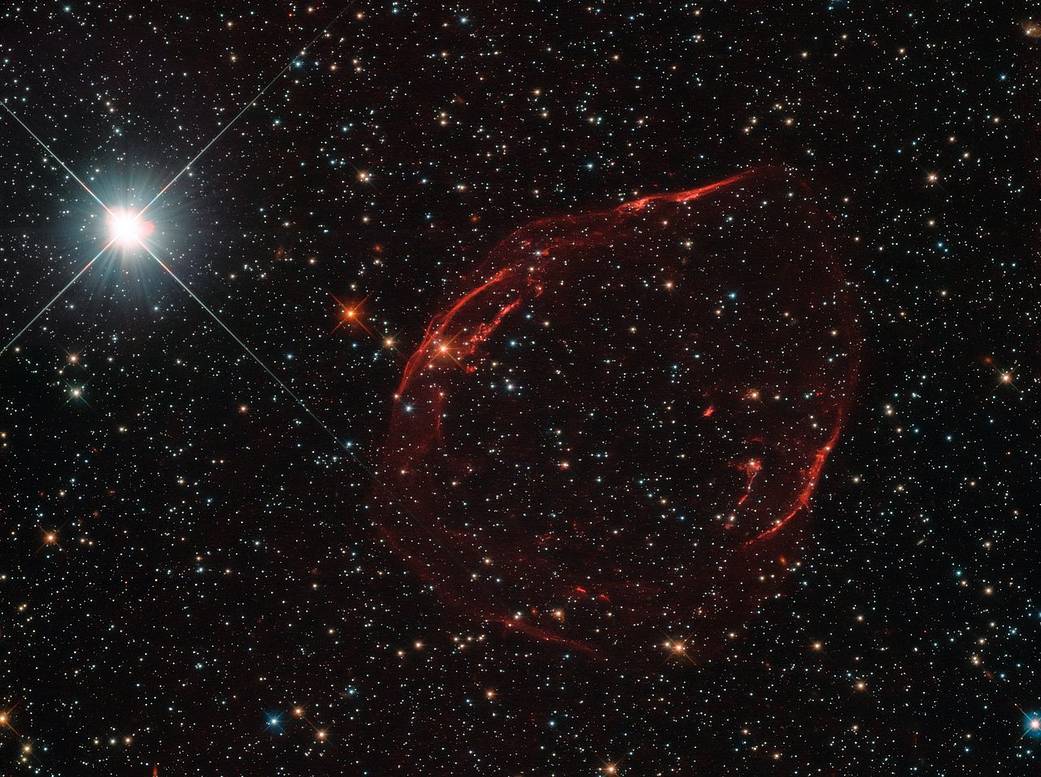

One way astronomers calculate the Hubble constant is to look out for type 1a supernovas that randomly pop off in other galaxies. These stellar explosions begin with white dwarf stars, which are very dense nuggets of material left over after stars like Earth's sun run out of fuel and collapse, according to the video. Such a white dwarf star steals gas from a binary partner, and as the gas accumulates on the white dwarf's surface, the star's mass reaches a certain threshold (known as the Chandrasekhar limit) and the star then collapses, detonating as a supernova.

Because these types of supernovas all generate approximately the same amount of light no matter where they are in the universe, astronomers can use them to measure cosmic distances. (Comparing a supernova's actual brightness with its apparent brightness as seen from Earth reveals how far away it is.) Objects that act as very precise cosmic distance markers are called "standard candles," and type 1a supernovas are used to pinpoint how far away their host galaxies are. This, in turn, helps scientists measure a value for the Hubble constant. As astronomical methods have improved, these measurements have become more precise.
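
As a rough sketch of the standard-candle idea, the comparison between actual and apparent brightness can be written with the astronomer's distance modulus, m - M = 5 log10(d / 10 parsecs). The peak absolute magnitude of -19.3 used below is an assumed typical value for this kind of supernova, not a figure from the video, and the sketch ignores dust and the cosmological corrections that real analyses apply.

```python
ASSUMED_PEAK_ABS_MAG = -19.3  # assumption: typical peak absolute magnitude of a type 1a supernova


def luminosity_distance_pc(apparent_mag: float,
                           absolute_mag: float = ASSUMED_PEAK_ABS_MAG) -> float:
    """Invert the distance modulus m - M = 5 * log10(d / 10 pc) to get d in parsecs."""
    return 10 ** ((apparent_mag - absolute_mag + 5) / 5)


if __name__ == "__main__":
    # Hypothetical supernova observed at apparent magnitude 16.0 at peak brightness.
    d_pc = luminosity_distance_pc(16.0)
    print(f"Estimated distance: {d_pc / 1e6:.1f} million parsecs")
```

The fainter the supernova appears (the larger its apparent magnitude), the larger the distance the formula returns: every 5 magnitudes of dimming corresponds to a factor of 10 in distance.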

Measuring the Big Bang's afterglow

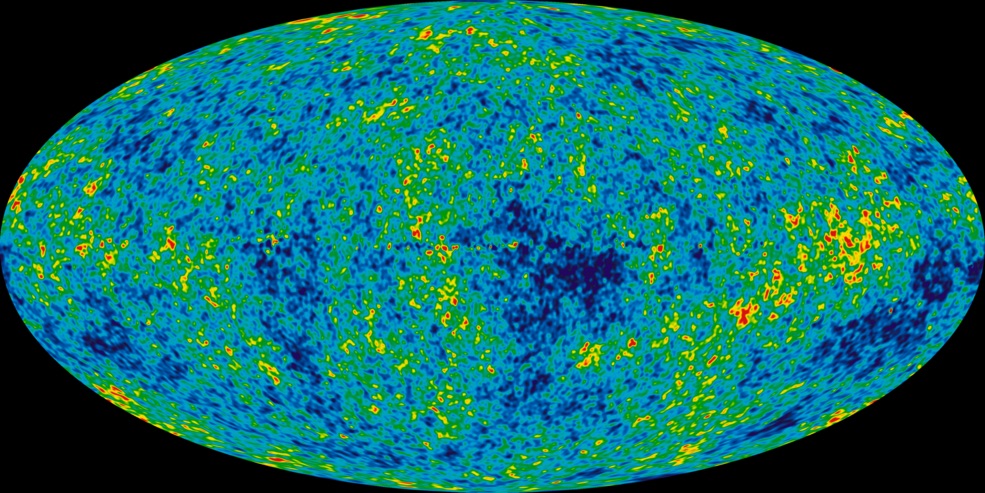

Another way to calculate the Hubble constant is to measure the afterglow of the Big Bang, a phenomenon called the cosmic microwave background, or CMB. As scientists have gotten better at observing the CMB through advances in technology and techniques, they've been getting a better understanding of this ghostly glow.

Here's how scientists have tried to use the CMB to measure the expansion of the universe: They make use of the fact that shortly after the Big Bang, 13.8 billion years ago, as the soup of superheated particles (known as a plasma) started to cool, this material emitted photons, leaving an indelible mark on the outermost edge of the observable universe. Using space telescopes like NASA's Wilkinson Microwave Anisotropy Probe (WMAP) and Europe's Planck space telescope, scientists can observe minuscule wobbles in the temperature of the CMB. These "anisotropies" help researchers understand some of the most perplexing mysteries of the universe, such as the distribution of dark matter and dark energy. The wobbles can also be used to calculate H0 by comparing these precise observations with theoretical models of how the universe has expanded and evolved.

The problem

The values for the Hubble constant found using these two methods have been in rough agreement. But as observational methods have become more precise, the discrepancy between the two has become more pronounced, researchers said in the video.

Using the Hubble Space Telescope to measure the light from a number of type 1a supernovas, a 2016 study revealed an 8 percent discrepancy in the value for the Hubble constant when compared with the method using precise measurements of the CMB. In other words, the Hubble Space Telescope recorded a faster rate of universal expansion than CMB measurements did. For the Hubble constant to be a fundamental constant, it must be, well, constant, no matter how it's measured.
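
To make the size of that gap concrete, here is a small sketch of the arithmetic. The two H0 values are illustrative inputs of roughly the size reported in the literature, not numbers quoted in the video, and the function name is hypothetical.

```python
def percent_discrepancy(h0_supernova: float, h0_cmb: float) -> float:
    """Percentage by which the supernova-based H0 exceeds the CMB-based H0."""
    return 100.0 * (h0_supernova - h0_cmb) / h0_cmb


if __name__ == "__main__":
    # Illustrative values in km/s/Mpc (assumed for this example only).
    h0_supernova = 73.2
    h0_cmb = 67.8
    gap = percent_discrepancy(h0_supernova, h0_cmb)
    print(f"Supernova-based value is {gap:.1f} percent higher")
```

Whether a gap of this size matters depends on the uncertainty of each measurement, which this sketch leaves out; the discrepancy became a problem only once both error bars shrank enough that they no longer overlapped.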

This active area of research is forcing scientists to ponder whether the assumption that these two methods should agree is wrong, according to the video. Scientists hypothesize that maybe there's a new kind of particle in the universe that is skewing the results of one of the methods.

Or perhaps something significant in the universe has changed over time, which would produce a different result from the CMB (which measures the expansion long ago) and the supernova method (which measures the expansion more recently). For example, perhaps the properties of dark matter — a mysterious material that makes up about 80 percent of the matter in the universe — change with time.

But, if this is the case, it could mean that the standard model of cosmology — the basic description of the universe that physicists agree on — is not complete (or is even wrong) and we need to develop a new model that better describes these observations.

Original article on Space.com.

Ian O'Neill is a media relations specialist at NASA's Jet Propulsion Laboratory (JPL) in Southern California. Prior to joining JPL, he served as editor for the Astronomical Society of the Pacific's Mercury magazine and Mercury Online and contributed articles to a number of other publications, including Space.com, Live Science, HISTORY.com and Scientific American. Ian holds a Ph.D. in solar physics and a master's degree in planetary and space physics.