Planet Hunting to Sky Surveys, Astronomy and Statistics Realign (Op-Ed)

G. Jogesh Babu is director of the Center for Astrostatistics at Penn State, and Eric Feigelson is the center's associate director and professor of astronomy and astrophysics at Penn State. The authors contributed this article to Space.com's Expert Voices: Op-Ed & Insights.

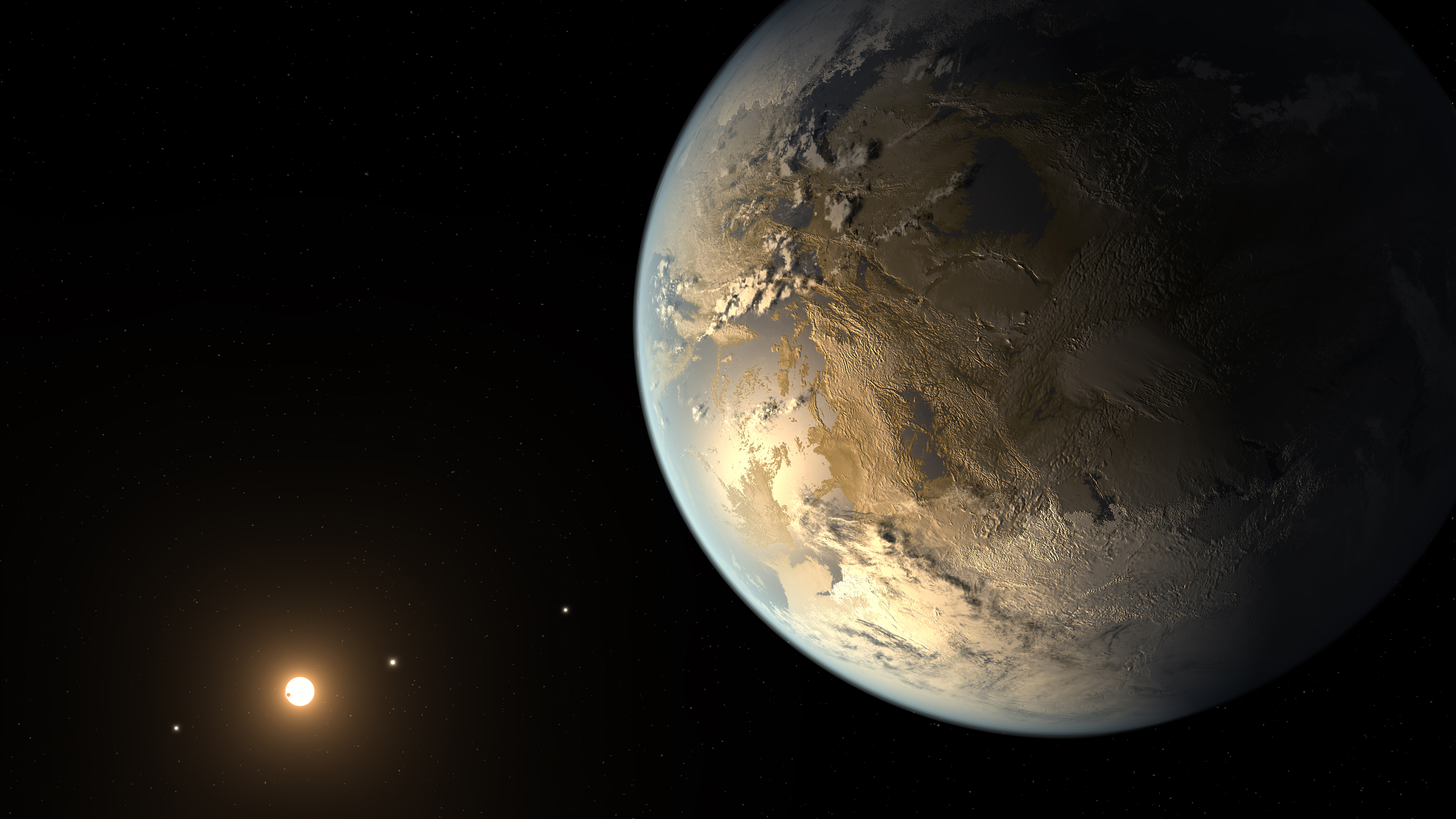

After a century-long hiatus, astronomy and statistics recently reconnected, giving rise to the new field of astrostatistics. Some of today's most important issues in astronomy require sophisticated statistical modeling. NASA's Kepler mission has detected several thousand planets orbiting other stars, but it was through statistics that astronomers inferred that most stars have planetary systems and that hundreds of millions of Earth-like planets probably exist in the galaxy. And in cosmology, statistics refined the parameters of the Lambda Cold Dark Matter (Lambda-CDM) consensus model of the universe, which suggests the universe expanded following a Big Bang 13.7 billion years ago, slowed by dark matter and accelerated by dark energy.

Insights from the ancients

Such insights followed a long gap in the relationship between astronomy and statistics, two fields now joined in astrostatistics, a term we coined in our 1996 book of the same title.

Astronomy is perhaps the oldest empirical science: quantitative measurements of celestial phenomena were carried out by many ancient civilizations. The Platonists of ancient Greece proposed a geometric cosmological model involving crystalline spheres spinning around a static Earth, a vision that endured in Europe for 15 centuries.

It was another Greek natural philosopher, Hipparchus, who made one of the first applications of mathematical principles that we now consider to be in the realm of statistics. Finding scatter in Babylonian measurements of the length of a year, defined as the time between solstices, Hipparchus made the breakthrough decision to take guidance from the middle of a data range as the best value.

Centuries later, a debate emerged about whether it is better to gather many data points or a few. On one side, the Arabic astronomer Abū Rayḥān al-Bīrūnī argued for more measurements to compensate for the dangers of propagating errors from inaccurate instruments and inattentive observers. In contrast, some medieval scholars advised against gathering repeated measurements, fearing that errors would compound rather than compensate for each other. It was in the 16th century that the utility of the mean to increase precision — a favored method today — was demonstrated with great success by Danish astronomer Tycho Brahe.
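The benefit of averaging can be illustrated with a small simulation. This is only a sketch under stated assumptions: the function name `scatter_of_mean`, the "true" year length of 365.25 days and the Gaussian error of 0.5 days are hypothetical choices for illustration, not historical values. It suggests that when errors are independent, the scatter of the mean shrinks roughly as one over the square root of the number of measurements.

```python
import random
import statistics


def scatter_of_mean(n_measurements, n_trials=2000, true_value=365.25,
                    sigma=0.5, seed=42):
    """Empirical standard deviation of the mean of n noisy measurements.

    All numbers here are illustrative assumptions: each measurement is the
    true value plus independent Gaussian noise with standard deviation sigma.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    means = []
    for _ in range(n_trials):
        sample = [rng.gauss(true_value, sigma) for _ in range(n_measurements)]
        means.append(statistics.fmean(sample))
    return statistics.stdev(means)


# Compare the simulated scatter with the sigma / sqrt(n) rule of thumb.
for n in (1, 4, 16, 64):
    simulated = scatter_of_mean(n)
    predicted = 0.5 / n ** 0.5
    print(f"n={n:3d}  simulated={simulated:.3f}  predicted={predicted:.3f}")
```

The sketch assumes independent errors. A shared instrumental bias would not average away, which is one reading of the medieval worry that errors might compound.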

In later centuries, some of the great thinkers of the day developed several elements of modern mathematical statistics — specifically to address celestial mechanics, where Newton's Laws of Motion were producing astonishingly precise and self-consistent quantitative results for solar-system phenomena.

In the late 18th century, in order to model cometary orbits, Adrien-Marie Legendre developed a system to fit noisy data to a mathematical model, which is now called the L2 least squares parameter estimation. The least-squares method became an instant success in European astronomy.
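As a rough illustration of the least-squares idea, the sketch below fits a straight line to noisy points by minimizing the sum of squared residuals. It is not Legendre's cometary orbit calculation. The function name `fit_line`, the straight-line model and the simulated data are all assumptions chosen to keep the example short.

```python
import random


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept.

    Uses the closed-form solution that minimizes the sum of squared
    vertical residuals (the L2 criterion).
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical data: a line with slope 2 and intercept 1, plus Gaussian noise.
rng = random.Random(0)
xs = [float(i) for i in range(50)]
ys = [2.0 * x + 1.0 + rng.gauss(0.0, 0.5) for x in xs]

slope, intercept = fit_line(xs, ys)
print(f"slope={slope:.3f}  intercept={intercept:.3f}")
```

With noise-free input the function recovers the line exactly; with noisy input the estimates should land close to the assumed slope and intercept, and closer still as more points are added.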

In the hands of astronomers, statistics itself was continuing to evolve at that time, as Christiaan Huygens wrote a book on probability in games of chance; Sir Isaac Newton developed a statistical interpolation procedure called the Newton-Raphson Method; Edmond Halley laid foundations of actuarial science; Adolphe Quetelet worked on statistical approaches to social sciences; and Sir George Biddell Airy wrote a volume on the theory of errors.

A parting of ways

But the two fields diverged in the late 19th and early 20th centuries. Astronomy leaped onto the advances of physics — electromagnetism, thermodynamics, quantum mechanics and general relativity — to understand the physical nature of stars, galaxies and the universe as a whole. A subfield called statistical astronomy was still present, but concentrated on rather narrow issues involving star counts and the structure of galaxies.

During that period, statistics concentrated on analytical approaches and found its principal applications in social sciences, biometrical sciences and practical industries, such as manufacturing. The close association of astronomy and statistics had been sundered.

Astronomy at the beginning of the 21st century, particularly research arising from wide-field survey observatories looking at the sky across various wavelengths of light, finds itself challenged as it attempts to take advantage of statistical tools. These surveys can produce petabytes of raw data that may be reduced to terabytes of images or billion-object databases. It is a tremendous challenge to interpret such data through standard statistical approaches: sampling, multivariate and survival analysis (which handles data known only to lie below or above a certain value, without an actual measured value), image and spatial analysis, signal processing and time-series analysis, nonlinear regression (modeling functions from noisy data), and others.

The modern field of astrostatistics

The modern field of astrostatistics grew in the 1990s, stimulated by the increasing complexity of astronomical data analysis and interpretation, and by increasing awareness of advances in applied and computational statistics. Cross-disciplinary conferences began to bring astronomers and statisticians together to address statistical challenges in astronomy, astronomical image processing and galaxy clustering — and more recently, those efforts were matched to new textbooks to guide rising astronomers and statisticians.

Our center, the Center for Astrostatistics, was founded in 2003 to confront this diversity of statistical issues. Its mission is to develop and share statistical expertise and toolkits for astronomy and related observational sciences.

The Center for Astrostatistics is a unique organization in its mission to advance statistical methodology in astronomical research, and this is a pivotal moment in the nascent astrostatistics field. Astronomers are moving away from observation of single objects to enormous sky surveys at different wave bands (i.e., radio, infrared, visible, X-ray). In addition, efforts such as the discovery of planets orbiting other stars require detecting extremely weak or rare signals in complex data sets. And statistics is guiding progress in understanding the origin of the universe itself, for example through the modeling of faint ripples in the cosmic light that suffuses the sky at millimeter wavelengths.

In addition to conducting interdisciplinary research on astrostatistical issues, our center organizes Summer Schools in Statistics for Astronomers for young researchers, international Statistical Challenges in Modern Astronomy meetings for vanguard research, a statistical Web computing service, and two Web sites (http://astrostatistics.psu.edu and http://asaip.psu.edu) with links to recent papers, blogs, job and meeting postings, and other resources.

Astrostatistics comes of age, again

In the astronomy community, astrostatistical methodology papers are now more numerous, summer schools are burgeoning, astrostatistical sessions at general meetings are well-attended, and one of the largest astronomy projects for this decade, the Large Synoptic Survey Telescope (LSST), has now formed a formal astrostatistics/astroinformatics collaboration.

Since 2005, the Center for Astrostatistics summer schools have trained more than 650 graduate students and young researchers in intense one-week astrostatistics courses, with additional tutorials held in India, Brazil, Chile, Mexico, China and across Europe. At this pace, the summer schools will train 10 percent of the world's young astronomers, filling a critical need for the U.S. scientific workforce.

Advanced statistical methods are now deeply embedded in the scientific communities involved in space missions, such as the European Planck survey of the cosmic microwave background and NASA's Kepler satellite searching for planets orbiting other stars.

And statistical and informatics innovations are needed for the Big Data projects of wide-field, multi-epoch sky surveys such as the Catalina Real-Time Transient Survey (CRTS), the Panoramic Survey Telescope & Rapid Response System (Pan-STARRS), the Dark Energy Survey, and the forthcoming LSST.

The scientific insights from these current and future petabyte-scale sky surveys require complex statistical modeling using tools beyond the training of astronomers. Through more collaboration between statisticians and astronomers, as well as increased funding to fertilize these endeavors, such efforts can succeed.

The views expressed are those of the author and do not necessarily reflect the views of the publisher. This version of the article was originally published on Space.com.