ChatGPT on Mars: How AI can help scientists study the Red Planet

"This is not trivial. It is not just a paper. It is about who we really want to become as a species."

The world is abuzz, perhaps even befuddled, about the growing use of artificial intelligence. One of the most popular artificial intelligence (AI) tools available to the public today is ChatGPT, an AI-powered language model that has been "trained" and fed vast amounts of online information. After taking all that in, ChatGPT can regurgitate human-like text responses to a given prompt. It can respond to queries, discuss a lot of topics and crank out pieces of writing.

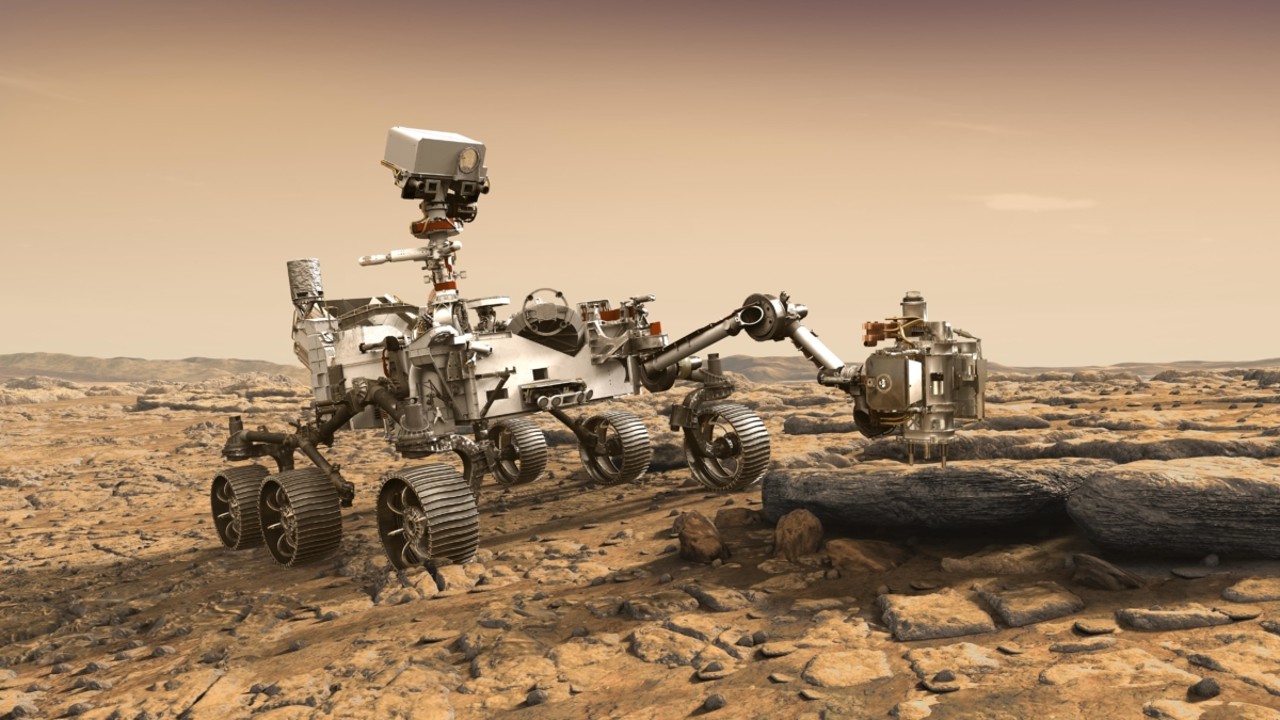

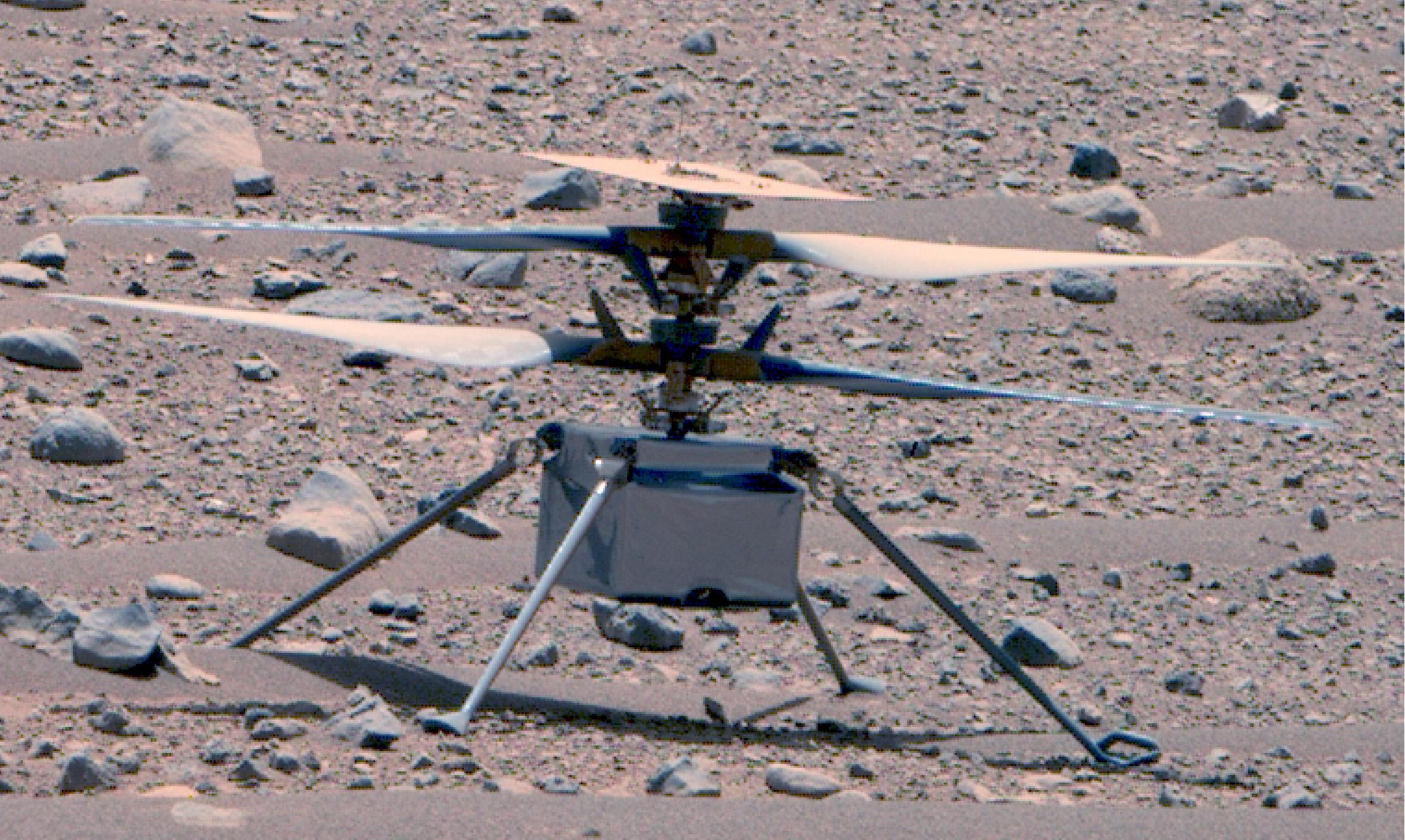

It isn't difficult to imagine a robot wheeling and dealing on the surface of Mars, factory-wired with ChatGPT or a similar artificial intelligence language model. This smartbot could be loaded with a suite of science devices. It could analyze what its scientific instruments are finding "on-the-spot," perhaps even collating any evidence of past life it uncovers nearly instantly.

That data could be digested, assessed, appraised and assembled in some scientific form. The product, in well-paginated condition, with footnotes to boot, could then be transmitted directly from the robot to a scientific journal, like Science or Nature, for publication. Of course, that paper would then be peer reviewed — maybe by AI/ChatGPT reviewers. Sound far-fetched?

I presented this off-Earth, on-Mars scenario to several leading researchers and received a variety of reactions in return.


Prone to hallucination

"It could be done but there could be misleading information," said Sercan Ozcan, Reader in Innovation and Technology Management at the University of Portsmouth in the United Kingdom. "ChatGPT is not 100% accurate and it is prone to 'hallucination.'"

Ozcan said he's not sure if ChatGPT would be valuable if there is no prior volume of work for it to analyze and emulate. "I believe humans can still do better work than ChatGPT, even if it is slower," he said.


His advice is to not use ChatGPT "in areas where we cannot accept any error."

Humans in the loop

Steve Ruff, associate research professor at Arizona State University's School of Earth and Space Exploration in Tempe, Arizona, is deeply involved in studying Mars.

"My immediate reaction is that it's highly unlikely that 'on-the-spot' manuscripts would be a realistic scenario given how the process involves debates among the team over the observations and their interpretation," Ruff said. "I'm skeptical that any AI, trained on existing observations, could be used to confidently interpret new observations without humans in the loop, especially with new instrument datasets that have not been available previously. Every such dataset requires painstaking efforts to sort out."

For the near term, Ruff thinks AI could be used for rover operations, like picking targets to observe without humans in the loop, and for navigation.

First things first

In what world do we want to live?

Perhaps that is the strongest question, said Nathalie Cabrol, Director of the Carl Sagan Center for Research at the SETI Institute in Mountain View, California.

"First things first," Cabrol said. "AI is a formidable tool and should be used as such to support humans in their activity. We actually do that already every day, in one form or another," she added, "and improved versions might make things better."

On the other hand, like any human tool, AI is a double-edged sword and sometimes leads people to start thinking "nonsense," Cabrol added, and she believes that to be the case here.

"I do personally like writing papers. It is a great time where I see my work coming to fruition and can put my ideas together on paper," Cabrol said. She sees writing as an important part of her creative process.

"But let's assume for a moment that I let this algorithm write it for me. Then, I am being told that it's okay because the paper will be reviewed," Cabrol said. "But by whom? I would assume that if you let algorithms do the job for you it's because you assume they will be less biased and do a better job? Following that logic, I would assume that a human is not qualified to review that paper."

Specters of "transhumanism"

Cabrol senses that a next question is: Where do we stop? What if all researchers ask AI to write their research grant proposals? What if they do and don't tell?

"This depends in which world you want to live and what part you want left to humanity," Cabrol said. "We are creative beings and we are not perfect," she continued, "but we learn from our mistakes and that's part of our evolution. Mistake and learning are other words for 'adaptation'," she said.

By letting AI get into what makes us human, we are messing with our own evolution, Cabrol added, and she sees specters of "transhumanism" in all of this. Transhumanism can be defined as a loose ideological movement united by the belief that the human race can evolve beyond its current physical and mental limitations, especially by means of science and technology.

"Of course, that's not a chip in our brain and that's only a paper, you will say. Unfortunately, it is part of a much broader, and very disturbing, discourse on the (mis)use of AI," Cabrol concluded. "This is not trivial. It is not just a paper. It is about who we really want to become as a species. Personally, I see AI useful as a tool, and I will confine it as that."

Knowledge cutoff

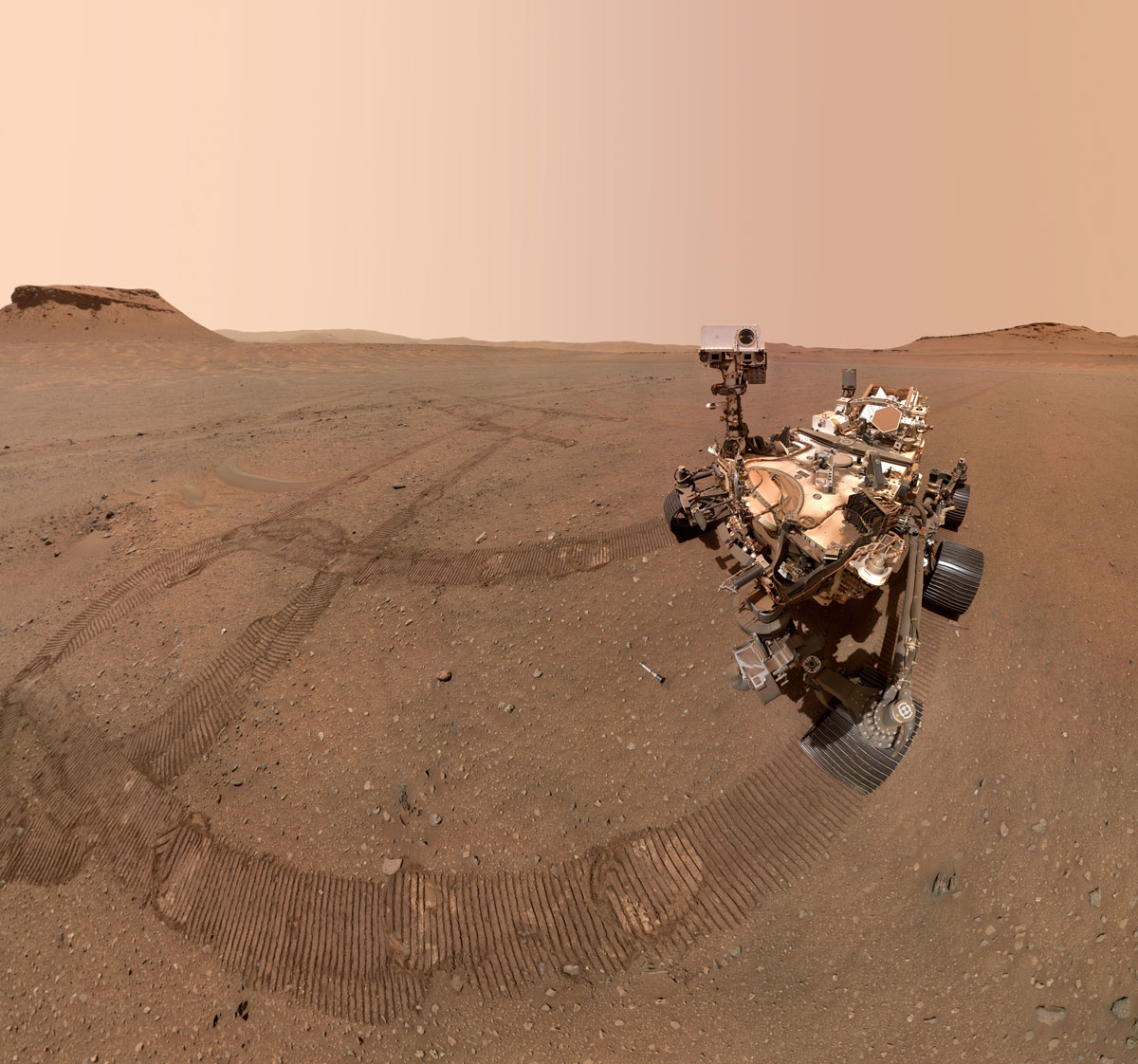

"How funny that we still argue about the definition of life as we know it, and we're starting to use a tool in that search that also stretches the definition of life," said Amy Williams, assistant professor in Geological Sciences at the University of Florida in Gainesville. She is a participating scientist on the NASA Curiosity and Perseverance rover missions that have robots scouting about on Mars.

Williams reacted to the AI-ChatGPT off-world setting in full disclosure mode. "The first time I used ChatGPT was in preparing for this response, asking it: 'What organic molecules have the Mars rovers found?' The question was based on my particular field of expertise," she told Space.com.

"It was illuminating in that it did a great job providing me with statements that I would describe as robust and appropriate for a summary that I could give in an outreach talk to the general public about organic molecules on Mars," said Williams.

But it also demonstrated to Williams its limitation in that it could only access data from, in her case, September 2021 — flagging it as a "knowledge cutoff."

"So its responses did not encompass the full breadth of published results about organics on Mars that I know about since 2021," she said.

Emphasizing that she is not a specialist in AI or machine learning, Williams said that future iterations of ChatGPT + AI will likely be able to incorporate more recent data and generate a complete synthesis of the recent results from any given scientific exploration.

"However, I still see these as tools to use in step with humans, instead of in place of humans," Williams remarked. "Given the limitations in data uplink and downlink with our current Deep Space Network, it is difficult for me to see a way to upload the knowledge base for something as complex as, for example, the current and historic data and context for the sources, sinks, and fates of organic molecules on Mars so that the onboard AI could generate a manuscript for publication," she said.

Putting it into context

Williams views cutting-edge planetary research as something that requires "retrospection, introspection and prospection." We push forward the boundaries of science, she added, by considering options that have never before been considered.

"Right now, my experience with ChatGPT showed me it is great at a literature search and turning that information into, effectively, an annotated bibliography. It could certainly save me time in looking up fundamental knowledge. It told me what we already know — and typed it up very nicely! — but it was not anything that any Mars organic geochemistry graduate student couldn't tell me."

In the end, Williams said that while ChatGPT + AI is a powerful tool that can positively augment the process of conveying information and new discoveries, "I don't see it replacing the human-driven process of synthesizing new information and putting it into context to generate new insights into science. However, if every AI sci-fi movie I've seen is predictive of the future, I may be wrong!"


Leonard David is an award-winning space journalist who has been reporting on space activities for more than 50 years. Currently writing as Space.com's Space Insider Columnist among his other projects, Leonard has authored numerous books on space exploration, Mars missions and more, with his latest being "Moon Rush: The New Space Race" published in 2019 by National Geographic. He also wrote "Mars: Our Future on the Red Planet" released in 2016 by National Geographic. Leonard has served as a correspondent for SpaceNews, Scientific American and Aerospace America for the AIAA. He has received many awards, including the first Ordway Award for Sustained Excellence in Spaceflight History in 2015 at the AAS Wernher von Braun Memorial Symposium. You can find out about Leonard's latest project at his website and on Twitter.


Unclear Engineer: There was a story on CBS "60 Minutes" that had their reporter ask an AI program to write a technical paper on a specified subject. It did, complete with references. But, when the reporter looked up those references, he found that the AI program had made them up - they did not exist. So, AI will lie. The algorithms in that AI were designed to mimic what people say and do, rather than to find information and apply objective logic to pattern recognition.

So, not all AI is really intelligence in the sense of recognizing things that humans have not already recognized. Some of it, at least, is merely a con job.

So, whoever contracts an AI provider to do a task needs to be able to review the product to make sure they are not being conned by an unscrupulous contractor.

billslugg: Here is the deal on references. ChatGPT is "predictive". It will give you the most likely word to appear next in whatever it is writing about. For example, if you ask ChatGPT for a short explanation of Special Relativity along with a reference, it will give you a well-written, accurate, two-paragraph summary. It will be an average of all that is out there.

For a reference, it will give the most likely one, made from scratch, not the most common one of those actually out there. Here is an example with made-up items:

Einstein, A. "Special Relativity and the Speed of Light", Physics Abstract Letters, 1905, p1-20

The author is obvious

The title is a likely one

Physics Abstract Letters - because that is where he published most often

1905 because that was the year this came out

20 pages - because that is roughly how long his papers were

1-20 because they always put him at the front of the journal

ChatGPT is doing exactly what it was designed to do. They have "oversold" it in my opinion. What is especially alarming is that it cannot tell true from false. Ask it about UFOs and it will probably come down on the side of the "believers" since they are very vocal. Disbelievers have only images of clear sky. That doesn't get nearly as much press as fuzzy discs.

Unclear Engineer: Yes, ChatGPT is doing what it was designed to do, which is to make its output look like something produced by a human. The problem is, it is not really adding any intelligence to the process it uses, and may even be using misinformation it has "learned" because that is what it sees on the Internet about a particular subject.

Using the same process to "predict" what a reference should look like is the fatal flaw in the ChatGPT process algorithms. It is producing fake references to simply simulate what real references would look like to somebody who does not actually check.

So, it is not really intelligence. It is simply a tool for faking intelligence.

If I ever get around to testing it, I will ask it to provide 3 papers on the same controversial subject, one written from each of the opposing perspectives, and one where it is instructed to be "objective". The first 2 products should look like the typical political propaganda that we are already being blasted with every day. The 3rd should be interesting. I expect it will only do what some websites like Allsides.com already do, which is to provide stories independently from both perspectives, without any actual analysis of where those sides are being factually misleading when viewed in a broad perspective that draws in other information.

So, I expect ChatGPT will get used a lot by political activists to shorten the time that they need to concoct their messages to the masses. And I expect them to try to argue that those messages are "right" because they were produced by AI. But, they were really not produced by artificial intelligence, they are only the product of automated prediction of what people expect to hear because that is what they are already saying. I don't see where that has any use for truly educating us, but I do see that it has a lot of uses for misleading us.

billslugg: There must be good uses for such an algorithm. I just saw a poem written in words starting with each letter of the alphabet in order. Not a particularly useful thing but impressive nonetheless. What other things are out there?

Unclear Engineer: The real scientific uses of AI are in learning to recognize complicated relationships in large amounts of complex data. It is still a limited trait, but it can deal with larger amounts of data and more complex interactions within that data than humans can usually muster the time and attention to notice. Not to mention that it can do it faster, once humans have provided the "training" on what to look for.

But, if what we are looking for is actual intelligence, where we notice that something is not consistent with expectations (or political rhetoric), then we are not "there yet" with AI.