Radiation from Nearby Galaxies Bulked Up Early Monster Black Holes

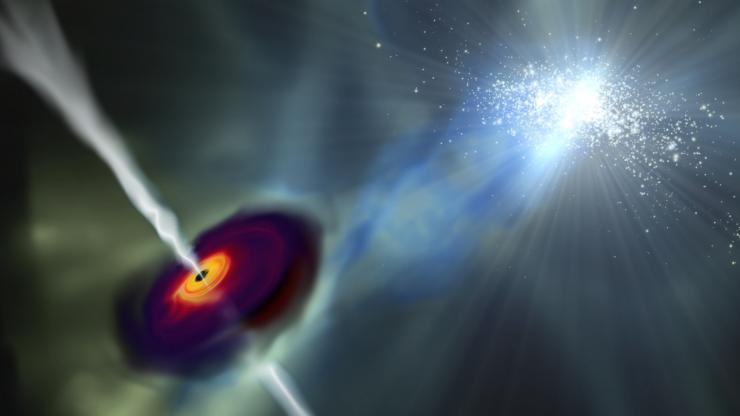

Bright radiation emitted by neighboring galaxies likely fueled the rapid growth of supermassive black holes in the early universe, a new study shows.

The earliest known supermassive black holes range in mass from millions to billions of solar masses and date back to when the universe was less than 1 billion years old. Their early existence has puzzled scientists, as these cosmic beasts are thought to form over billions of years.

Supermassive black holes can be found at the center of most, if not all, large galaxies, including the Milky Way. Using computer simulations, an international team of scientists found that the behemoths can grow rapidly after the bright radiation of a nearby galaxy heats up their host galaxy, which halts star formation.

For the most part, molecular hydrogen cooled the tumultuous hydrogen and helium plasma of the early universe, allowing stars and galaxies to form. However, when a heat source, such as the radiation of a nearby galaxy, destroys most of the molecular hydrogen, the gas within the galaxy can't cool as efficiently and, as a result, stars can't form. The leftover gas, dust and unborn star material would have then eventually collapsed into a supermassive black hole, John Wise, co-author of the study and a physicist at the Georgia Institute of Technology, told Space.com.

While black holes are known to form following the death of massive stars, in explosions known as supernovas, growing to supermassive size from such a start is believed to take billions of years. The new study, however, helps to explain how many of these behemoths formed so quickly in the early universe.

"The collapse of the galaxy and the formation of a million-solar-mass black hole takes 100,000 years — a blip in cosmic time," Zoltan Haiman, an astronomy professor at Columbia University and another co-author of the study, said in a statement from Georgia Tech. "A few hundred million years later [in the simulation], it has grown into a billion-solar-mass supermassive black hole. This is much faster than we expected."

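To get a feel for the pace Haiman describes, here is a rough back-of-the-envelope sketch. It assumes steady exponential growth and reads "a few hundred million years" as 300 million years; both are illustrative assumptions, not figures from the study, and the function name is hypothetical.

```python
import math

def implied_doubling_time(m_start, m_end, elapsed_years):
    """Doubling time needed to grow from m_start to m_end in elapsed_years,
    assuming steady exponential growth (an illustrative assumption)."""
    doublings = math.log2(m_end / m_start)
    return elapsed_years / doublings

seed_mass = 1e6    # solar masses, from the quote
final_mass = 1e9   # solar masses, from the quote
elapsed = 3e8      # years; ASSUMPTION standing in for "a few hundred million years"

doublings = math.log2(final_mass / seed_mass)
doubling_time = implied_doubling_time(seed_mass, final_mass, elapsed)

print(f"Doublings needed: {doublings:.1f}")
print(f"Implied doubling time: {doubling_time / 1e6:.0f} million years")
```

Under those assumptions, the black hole would need about 10 doublings, or one roughly every 30 million years. A longer or shorter reading of "a few hundred million years" scales that figure proportionally.
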
Originally, scientists thought that the nearby galaxy in this scenario "would have to be at least 100 million times more massive than our sun to emit enough radiation to stop star formation," the researchers said in the statement. However, the new simulation suggests that the neighboring galaxy could be smaller and closer than expected. In fact, the "Goldilocks" neighboring galaxy can't be too hot or too cold, Wise said.

"If the nearby galaxy were too close (too hot), then the radiation would start to 'evaporate' the gas cloud that is collapsing to form a massive black hole," Wise told Space.com. On the other hand, "if the nearby galaxy were too far (too cold), then the radiation would not be strong enough to destroy enough molecular hydrogen," and the gas and dust would be able to cool and form stars rather than a massive black hole, he added.

Using NASA's James Webb Space Telescope, which is expected to launch in 2018, the researchers plan to further study the collapse of such massive black holes and "what type of galaxy forms around one of these massive black hole seeds," Wise said.

"Understanding how supermassive black holes form tells us how galaxies, including our own, form and evolve and, ultimately, tells us more about the universe in which we live," John Regan, lead author of the study and a postdoctoral researcher at Dublin City University, said in the statement from Georgia Tech.

Their recent findings were detailed March 13 in the journal Nature Astronomy.

Original article on Space.com.

Samantha Mathewson joined Space.com as an intern in the summer of 2016. She received a B.A. in Journalism and Environmental Science at the University of New Haven, in Connecticut. Previously, her work has been published in Nature World News. When not writing or reading about science, Samantha enjoys traveling to new places and taking photos! You can follow her on Twitter @Sam_Ashley13.