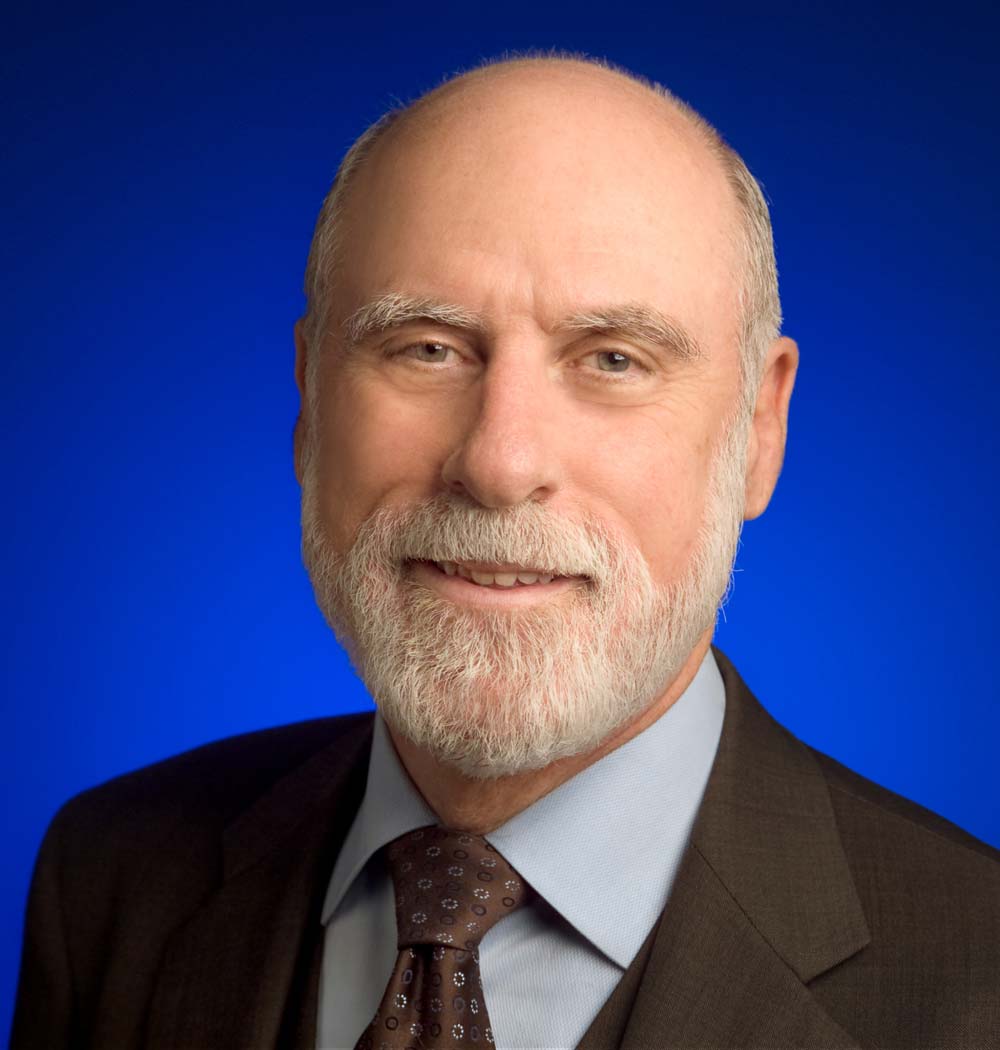

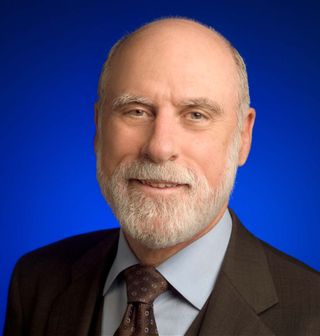

Do Not Fear Failure: The Lessons Are Important (Op-Ed)

Vinton G. Cerf is the vice president and chief Internet evangelist for Google. Cerf is the co-designer of the TCP/IP protocols and the architecture of the Internet. He has held executive positions at MCI, the Corporation for National Research Initiatives and the U.S. Defense Advanced Research Projects Agency, and has served on the faculty of Stanford University. U.S. President Barack Obama appointed Cerf to the National Science Board in 2012. Cerf has received numerous awards and commendations, including the U.S. Presidential Medal of Freedom, the U.S. National Medal of Technology, the Queen Elizabeth Prize for Engineering and the Charles Stark Draper Prize. Cerf contributed this article to Space.com's Expert Voices: Op-Ed & Insights.

Serious science demands that we accept, and even embrace, the possibility of failure. Our theories may be wrong or incomplete. Our experiments may be flawed, or our calculations may contain mistakes. We must especially avoid expectation bias: deliberately or even unconsciously filtering data to match our predictions, and rejecting "outliers" as if they had nothing to teach us.

Experimental science and theoretical science are two sides of the same coin. A theoretician produces models and predictions, and an experimentalist tries to validate them (or not!). Sometimes experiments fail to produce the anticipated results, and if all other explanations are exhausted, we may have to accept, as Sherlock Holmes would, that the theory is flawed and the truth lies elsewhere. A good scientist must be prepared to revise theories when they don't produce reliable predictions. On the other hand, some experiments fail not because the theory is wrong but because the measurement proves infeasible.

Science is neither quick nor easy

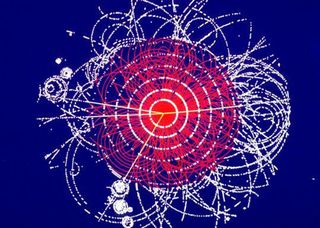

Consider the Higgs boson, which was predicted by theories stretching back to the 1960s but couldn't be confirmed until 2012, when CERN's Large Hadron Collider reached the energy levels needed to verify its existence. In this case, instrumentation had to catch up with theory. A similar story can be told about gravitational waves, which Albert Einstein predicted in 1916 and which were then rejected, accepted and rejected again several times. It took 100 years before the effect was credibly measured and demonstrated. These two examples illustrate the profound way in which engineering and science interact and reinforce each other.

But what of failure? Surely we do not celebrate it! Who wants to fail? And yet, failure is often our most effective teacher. Every experiment is a risk. The experimental setup may not work. The theory may be proven wrong. We take those risks because they are the fastest way to discover truth. The same is true of many other endeavors. Starting a company is a risk, sometimes a very big one, especially if we have borrowed other people's money to start it or have launched a business that has no precedent and no initial market. Some people might call it a gamble, but I think that word applies only if little or no thought has gone into the product, service or business model that is expected to drive the company toward revenue and, ultimately, profit, if that is the goal.

Do not fear failure

The Silicon Valley story teaches many lessons. One of them is that failure is not fatal. Indeed, a high percentage of new companies fail. Entrepreneurs are risk takers. They drive themselves, their partners and their employees hard (not to say crazy). They don't give up, at least not easily. If a business fails because of a lack of market growth, a lack of capital or an inability to find the necessary talent, its founders pick themselves up and start again. Ask any successful entrepreneur, and you are likely to hear about a few failures or near failures in his or her history.

What is vitally important is to learn to take advantage of failure. The first rule of failure is to find out why it happened. Here, brutal honesty is essential. Blindly blaming others teaches nothing. To be sure, someone else may have messed up and caused the failure, but don't forget to look in the mirror. The 1986 flight of the space shuttle Challenger ended in disaster when an O-ring failed during a launch at too low an ambient temperature. Subsequent investigation showed that the design of the solid rocket boosters had a flaw that surfaced at low temperatures and was known to be a potentially catastrophic hazard. The story of this tragedy has been used to illustrate not only the critical role of data integrity in engineering but also the demand for ethical integrity.

The critics can stand down

There is another side to this story: Conventional wisdom about failure may be dead wrong. I have met five Nobel Prize winners in the past year, and every one of them had a similar story: A measurement they took or a design they undertook was considered wrong or unworkable. "Experimental error!" "Violates the laws of physics!" cried the critics. And yet, sometimes decades later, these Nobelists were recognized for their breakthrough results.

Dan Shechtman's discovery of quasicrystals illustrates this phenomenon beautifully. Shechtman received the 2011 Nobel Prize in Chemistry for discoveries he made in 1982 that were widely rejected by his contemporaries. From the Nobel Prize press release:

"On the morning of 8 April 1982, an image counter to the laws of nature appeared in Dan Shechtman's electron microscope. In all solid matter, atoms were believed to be packed inside crystals in symmetrical patterns that were repeated periodically over and over again. For scientists, this repetition was required in order to obtain a crystal. … Shechtman's image, however, showed that the atoms in his crystal were packed in a pattern that could not be repeated. Such a pattern was considered just as impossible as creating a football using only six-cornered polygons, when a sphere needs both five- and six-cornered polygons. His discovery was extremely controversial. In the course of defending his findings, he was asked to leave his research group. However, his battle eventually forced scientists to reconsider their conception of the very nature of matter."

While we may not welcome failure, we must anticipate it and be prepared to learn the tough lessons it seeks to teach. Any other stance denies us the chance to succeed against all odds.

The views expressed are those of the author and do not necessarily reflect the views of the publisher. This version of the article was originally published on Space.com.